
How AI dubbing works

Four stages, each of which shapes the quality of the final dub.

Transcription

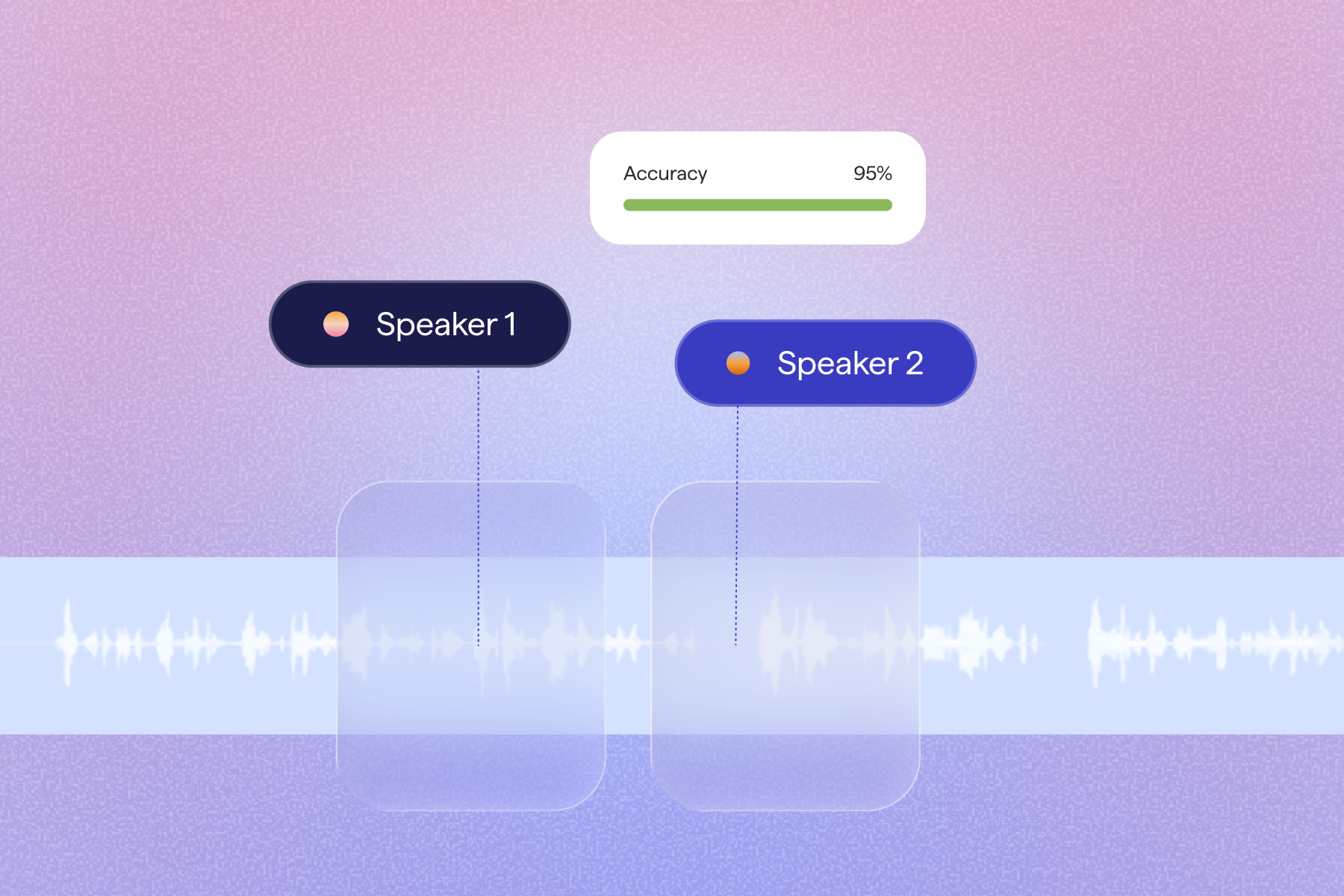

The original audio is transcribed with speaker diarisation. The system identifies who is speaking and when. For multi-speaker content like interviews, panels, or podcasts, this step determines whether the final dub assigns the right voice to the right person.
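As a rough illustration of what a diarised transcript might look like downstream, here is a minimal sketch. The `Segment` fields, the speaker labels such as `S1`, and the `group_by_speaker` helper are all hypothetical names chosen for this example; they are not Sarvam's data model or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    speaker: str  # diarisation label (hypothetical naming, e.g. "S1")
    start: float  # start time in seconds
    end: float    # end time in seconds
    text: str     # transcribed words for this stretch of audio


def group_by_speaker(segments):
    """Collect segments per speaker, in time order.

    A later synthesis step could then use one voice per key.
    """
    grouped = {}
    for seg in sorted(segments, key=lambda s: s.start):
        grouped.setdefault(seg.speaker, []).append(seg)
    return grouped


segments = [
    Segment("S2", 4.0, 7.5, "Thanks for having me."),
    Segment("S1", 0.0, 3.8, "Welcome to the show."),
    Segment("S1", 7.6, 10.0, "Let's get started."),
]
by_speaker = group_by_speaker(segments)
```

If a segment carries the wrong speaker label here, every later stage inherits the mistake, which is why diarisation accuracy matters for interviews and panels.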

Translation

The transcript is translated with attention to lip-sync and timing, not just meaning. A translated sentence is often longer than the original. The translation model is constrained to produce output that fits within the original speaker's timing window.

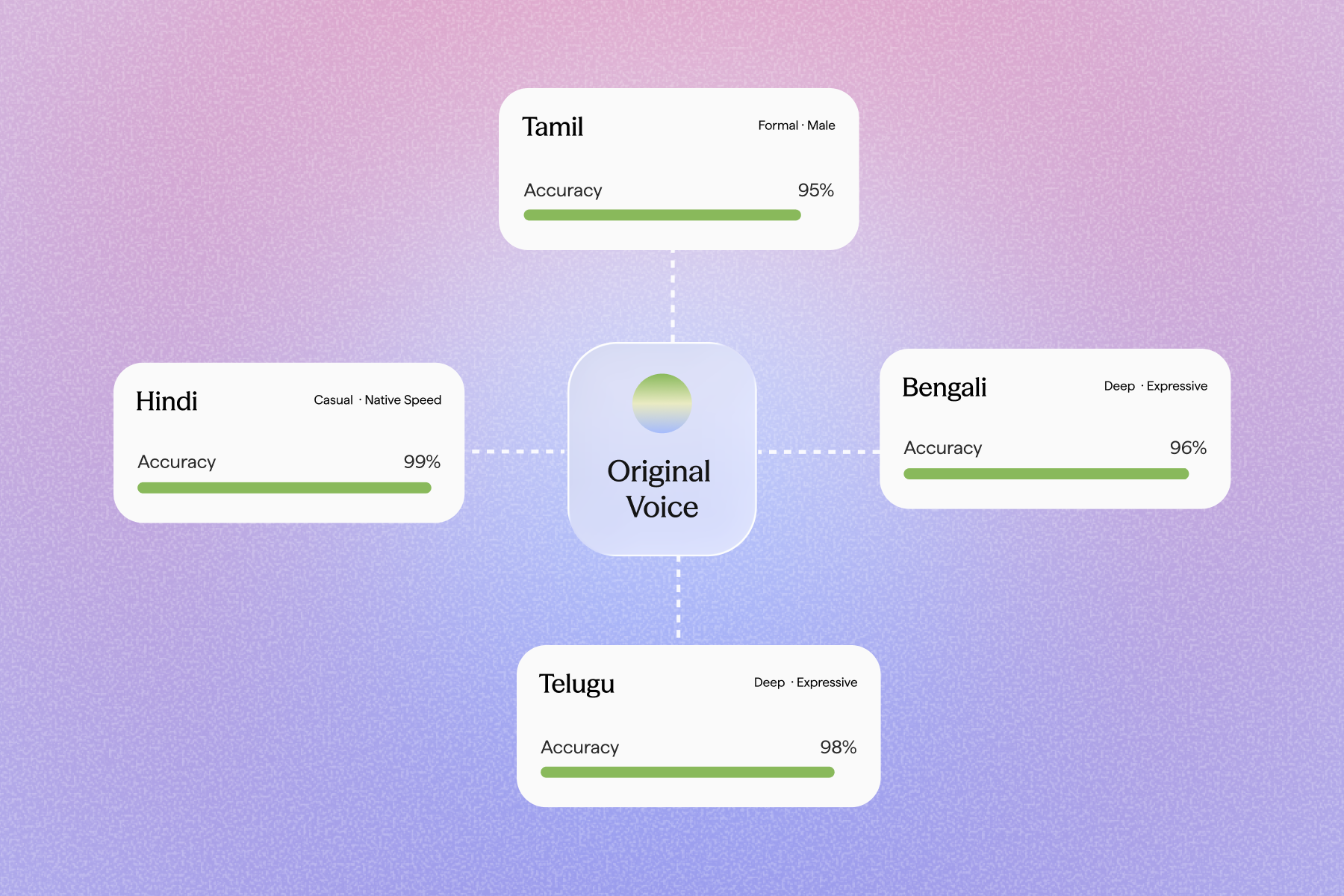
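The timing constraint can be pictured with a simple check. This is a sketch under stated assumptions, not how any production system measures fit: it estimates spoken duration from character count at an assumed rate (`chars_per_second`) and allows a small assumed speed-up (`max_speedup`). Both parameters and the `fits_window` function are hypothetical.

```python
def fits_window(text, start, end, chars_per_second=15.0, max_speedup=1.15):
    """Estimate whether translated text can be spoken inside [start, end].

    Returns (fits, required_speedup). The character-rate estimate is a
    crude stand-in for real duration modelling (an assumption).
    """
    window = end - start
    if window <= 0:
        raise ValueError("end must be greater than start")
    estimated = len(text) / chars_per_second  # seconds at normal pace
    required_speedup = estimated / window     # 1.0 means an exact fit
    return required_speedup <= max_speedup, required_speedup


# A 30-character line in a 2-second window fits at normal pace.
short_ok, short_ratio = fits_window("a" * 30, start=10.0, end=12.0)

# A 60-character line in the same window would need to be spoken twice
# as fast, so it would need a shorter rephrasing instead.
long_ok, long_ratio = fits_window("a" * 60, start=10.0, end=12.0)
```

When a candidate translation fails a check like this, the useful response is a shorter phrasing with the same meaning, rather than speeding up the audio until it sounds rushed.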

Voice Synthesis

Each speaker's voice characteristics are extracted and used to synthesise the translated audio. The output is not a generic voice reading a translation. It is the original speaker's voice, in a different language.

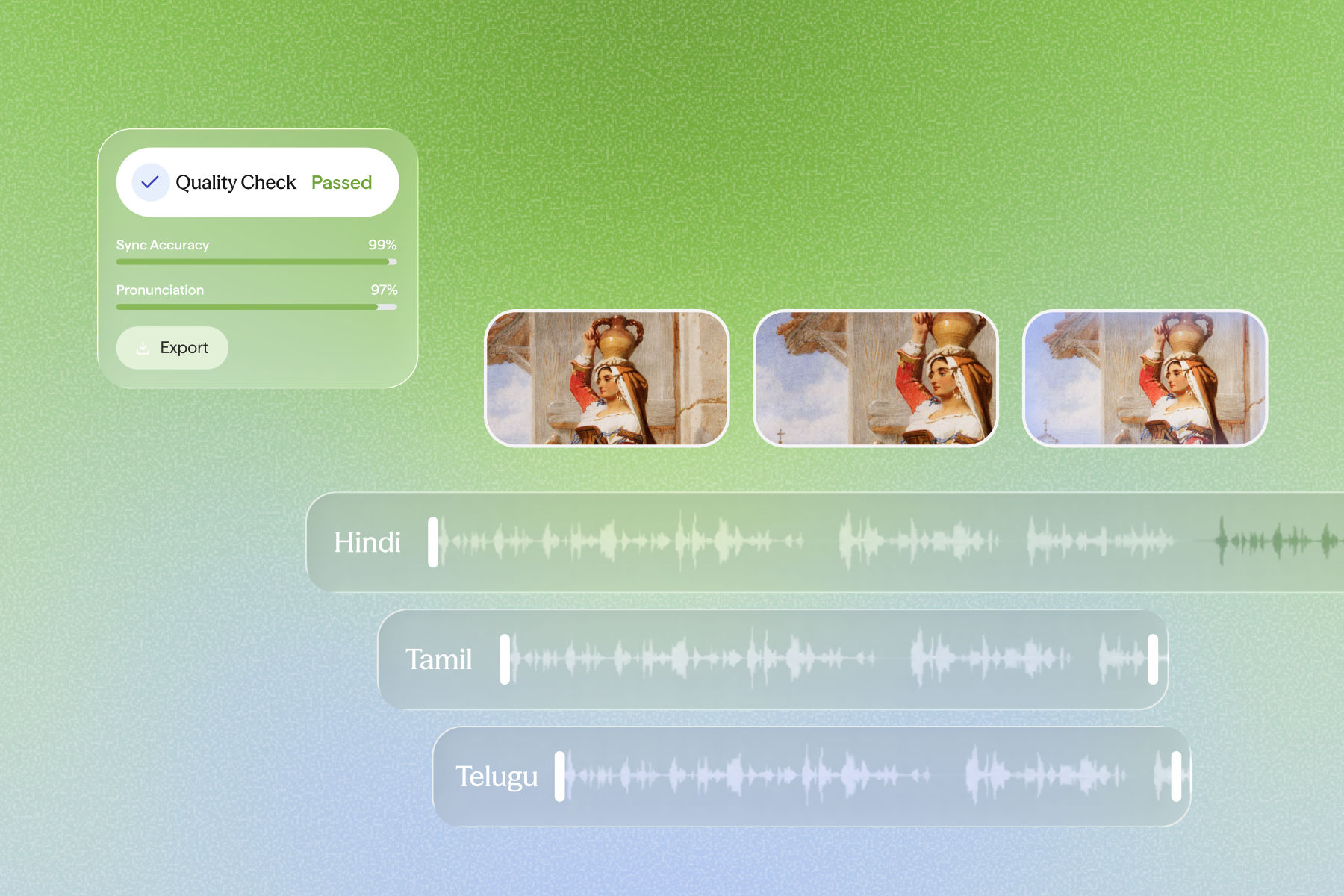

Sync and Export

The synthesised audio is aligned to the video timeline, and automated quality checks flag errors in translation, sync, and pronunciation. The final output is a dubbed video file, ready for distribution.
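One way to think about alignment is sketched below, assuming each synthesised clip is anchored at the start of its original timing window so that small length differences do not accumulate into drift. The `place_segments` function and its output fields are hypothetical, and a real pipeline would likely do considerably more.

```python
def place_segments(windows, durations):
    """Anchor each synthesised clip at its window start and report overrun.

    windows:   list of (start, end) times in seconds from the original video
    durations: list of synthesised clip lengths in seconds, same order
    """
    placements = []
    for (start, end), duration in zip(windows, durations):
        # Overrun is how far the clip spills past its window (0.0 if it fits).
        overrun = max(0.0, round(start + duration - end, 3))
        placements.append(
            {"start": start, "end": start + duration, "overrun": overrun}
        )
    return placements


placements = place_segments(
    windows=[(0.0, 2.0), (2.5, 5.0)],
    durations=[1.8, 2.75],
)
flagged = [p for p in placements if p["overrun"] > 0]
```

Segments that end up in `flagged` are the kind of sync issue an automated check could surface for review before export.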

Dubbing at scale

Built for how India actually sounds

Generic multilingual models were not trained for India. Sarvam was.

Voice preserved, not replaced

With voice cloning, audiences hear the same speaker in every language. Their tone, pacing, and register travel with the translation.

Precise audio-visual sync

Timing stays precise from the first frame to the last. No drift or mismatch, even in longer videos. Dubbing that fits, not just translates.

Automated quality checks

AI reviews every dub for translation accuracy, sync, and pronunciation. Catch errors before your audience does.

Built on Indian speech data

Hindi, Tamil, Telugu, Kannada, Bengali, Marathi. Each carries its own sound structures and speech rhythms. Sarvam's models know the difference.

Accurate multi-speaker dubbing

Speaker diarisation identifies who says what and when. Interviews, panels, and podcasts are handled without misattribution.

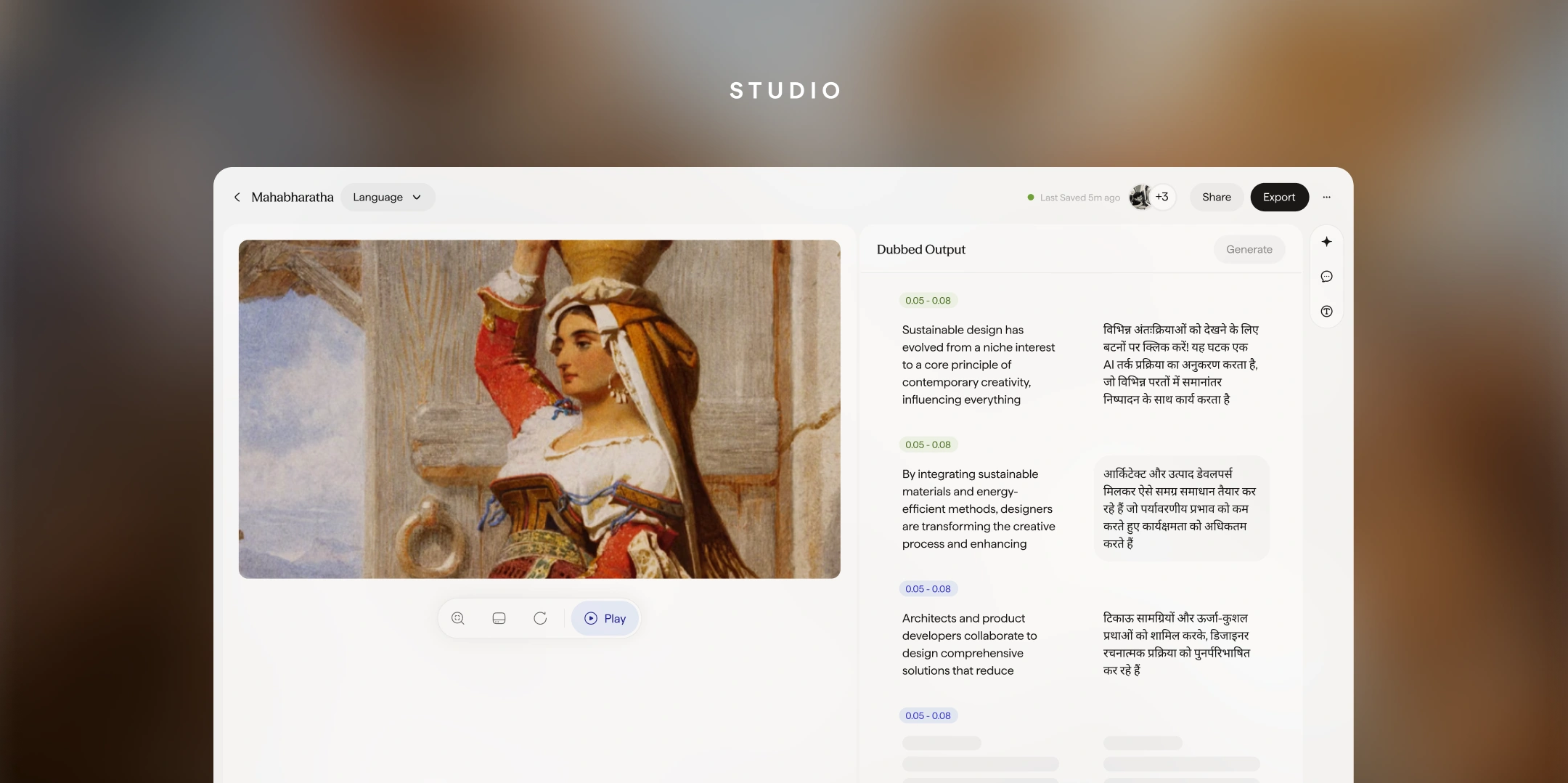

Editable transcript and script

Review and edit both the transcript and translation before audio synthesis. Useful for technical content, proper nouns, and domain-specific terminology.

For every kind of content team

Course creators who produce in English or Hindi can now reach learners in Tamil Nadu, Andhra Pradesh, West Bengal, or Karnataka without re-recording. What took weeks now takes hours.

Podcasters and video creators can distribute their work across language markets without losing what makes their content theirs.

Public awareness campaigns, health information, and civic content can reach citizens in their native language. Not the language of the capital.

Quarterly updates, onboarding videos, and compliance training can be localised for regional offices in hours, not weeks.

Original reporting in one language can be made accessible across language boundaries, without the cost of a full localisation team.

Dub into 11 Indian languages

Natural, voice-cloned dubbing across the languages your audience speaks.

What to look for in an AI dubbing tool

Not all AI dubbing tools produce the same quality. Here is what separates the useful ones from the frustrating ones.

Voice preservation vs. voice replacement

Look for systems that clone the original speaker's voice rather than replacing it with a generic one.

Timing fidelity

The dubbed audio should fit within the original speaker's timing window, especially for on-camera content.

Multi-speaker accuracy

Speaker diarisation should correctly assign each voice segment, especially for interviews and panels.

Language-specific quality

What matters is how the model performs on your target Indian language, not how many languages it claims.


India speaks many languages. Your content should too. Start dubbing in Indian languages today.
